How To Make Animated Filters In Processing

Cate left the tech industry and spent a year finding her way back whilst building her passion project Show & Hide. She is Manager of Mobile Engineering at Ride, speaks internationally on mobile development and engineering culture, co-curates Technically Speaking and is an advisor at Glowforge. Cate doesn't exactly live in Colombia but she spends a lot of time there, and has lived and worked in the UK, Australia, Canada, China and the United States, previously as an engineer at Google, an Extreme Blue intern at IBM, and a ski instructor. Cate blogs at Accidentally in Code and is @catehstn on Twitter.

A Brilliant Idea (That Wasn't All That Brilliant)

When I was traveling in China I often saw series of four paintings showing the same place in different seasons. Color (the cool whites of winter, pale hues of spring, lush greens of summer, and reds and yellows of fall) is what visually differentiates the seasons. Around 2011, I had what I thought was a brilliant idea: I wanted to be able to visualize a photo series as a series of colors. I thought it would show travel, and progression through the seasons.

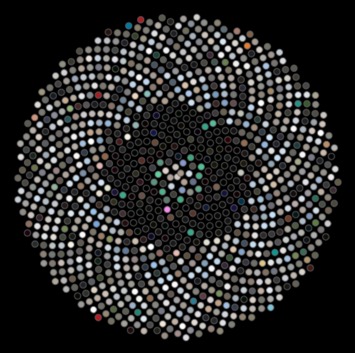

But I didn't know how to calculate the dominant color from an image. I thought about scaling the image down to a 1x1 square and seeing what was left, but that seemed like cheating. I knew how I wanted to display the images, though: in a layout called the Sunflower layout. It's the most efficient way to lay out circles.

I left this project for years, distracted by work, life, travel, talks. Eventually I returned to it, figured out how to calculate the dominant color, and finished my visualization. That is when I discovered that this idea wasn't, in fact, brilliant. The progression wasn't as clear as I hoped, the dominant color extracted wasn't generally the most appealing shade, the creation took a long time (a couple of seconds per image), and it took hundreds of images to make something cool (Figure 11.1).

Figure 11.1 - Sunflower layout

You might think this would be discouraging, but by the time I got to this point I had learned many things that hadn't come my way before (about color spaces and pixel manipulation) and I had started making those cool partially colored images, the kind you find on postcards of London with a red bus or phone booth and everything else in grayscale.

I used a framework called Processing because I was familiar with it from developing programming curricula, and because I knew it made it easy to create visual applications. It's a tool originally designed for artists, so it abstracts away much of the boilerplate. It allowed me to play and experiment.

University, and later work, filled up my time with other people's ideas and priorities. Part of finishing this project was learning how to carve out time to make progress on my own ideas; I required about four hours of good mental time a week. A tool that allowed me to move faster was therefore really helpful, even necessary, although it came with its own set of problems, particularly around writing tests.

I felt that thorough tests were especially important for validating how the project was working, and for making it easier to pick up and resume a project that was often on ice for weeks, even months at a time. Tests (and blogposts!) formed the documentation for this project. I could leave failing tests to document what should happen that I hadn't figured out yet, and make changes with confidence that if I changed something that I had forgotten was critical, the tests would remind me.

This chapter will cover some details about Processing and talk you through color spaces, decomposing an image into pixels and manipulating them, and unit testing something that wasn't designed with testing in mind. But I hope it will also prompt you to make some progress on whatever idea you haven't made time for lately; even if your idea turns out to be as terrible as mine was, you may make something cool and learn something fascinating in the process.

The App

This chapter will show you how to create an image filter application that you can use to manipulate your digital images using filters that you create. We'll use Processing, a programming language and development environment built in Java. We'll cover setting up the application in Processing, some of the features of Processing, aspects of color representation, and how to create color filters (mimicking what was used in old-fashioned photography). We'll also create a special kind of filter that can only be done digitally: determining the dominant hue of an image and showing or hiding it, to create eerie partially colored images.

Finally, we'll add a thorough test suite, and cover how to handle some of the limitations of Processing when it comes to testability.

Background

Today we can take a photo, manipulate it, and share it with all our friends in a matter of seconds. However, a long long time ago (in digital terms), it was a process that took weeks.

In the old days, we would take the picture, and then when we had used a whole roll of film, we would take it in to be developed (often at the pharmacy). We'd pick up the developed pictures some days later, and discover that there was something wrong with many of them. Hand not steady enough? Random person or thing that we didn't notice at the time? Overexposed? Underexposed? Of course by then it was too late to remedy the problem.

The process that turned the film into pictures was one that most people didn't understand. Light was a problem, so you had to be careful with the film. There was a process, involving darkened rooms and chemicals, that they sometimes showed in films or on TV.

But probably even fewer people understand how we get from the point-and-click on our smartphone camera to an image on Instagram. There are actually many similarities.

Photographs, the Old Way

Photographs are created by the effect of light on a light-sensitive surface. Photographic film is covered in silver halide crystals. (Extra layers are used to create color photographs; for simplicity let's just stick to black-and-white photography here.)

When taking an old-fashioned photograph (with film) the light hits the film according to what you're pointing at, and the crystals at those points are changed in varying degrees, according to the amount of light. Then, the development process converts the silver salts to metallic silver, creating the negative. The negative has the light and dark areas of the image inverted. Once the negatives have been developed, there is another series of steps to reverse the image and print it.

Photographs, the Digital Way

When taking pictures using our smartphones or digital cameras, there is no film. There is something called an active-pixel sensor which functions in a similar way. Where we used to have silver crystals, now we have pixels: tiny squares. (In fact, pixel is short for "picture element".) Digital images are made up of pixels, and the higher the resolution the more pixels there are. This is why low-resolution images are described as "pixelated": you can start to see the squares. These pixels are stored in an array, with the number in each array "box" containing the color.

In Figure 11.2, we see a high-resolution picture of some blow-up animals taken at MoMA in NYC. Figure 11.3 is the same image blown up, but with just 24 x 32 pixels.

Figure 11.2 - Blow-up animals at MoMA NY


Figure 11.3 - Blow-up animals, blown up

See how it's so blurry? We call that pixelation, which means the image is too large for the number of pixels it contains and the squares become visible. Here we can use it to get a better sense of an image being made up of squares of color.

What do these pixels look like? If we print out the colors of some of the pixels in the middle (10,10 to 10,14) using the handy Integer.toHexString in Java, we get hex colors:

    FFE8B1
    FFFAC4
    FFFCC3
    FFFCC2
    FFF5B7

Hex colors are six characters long. The first two are the red value, the second two the green value, and the third two the blue value. Sometimes there are an extra two characters which are the alpha value. In this case FFFAC4 means:

- red = FF (hex) = 255 (base 10)

- green = FA (hex) = 250 (base 10)

- blue = C4 (hex) = 196 (base 10)
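The breakdown above can be reproduced with bit shifts. This is a minimal sketch in plain Java, assuming a color packed as `0xRRGGBB`; the class and method names are made up for illustration and are not part of the app.

```java
// Sketch: split a packed 0xRRGGBB color into its three channels.
final class HexChannels {
    // Shift the wanted byte down to the lowest position, then mask it off.
    static int red(int rgb)   { return (rgb >> 16) & 0xFF; }
    static int green(int rgb) { return (rgb >> 8) & 0xFF; }
    static int blue(int rgb)  { return rgb & 0xFF; }

    public static void main(String[] args) {
        int pixel = 0xFFFAC4;
        System.out.println(Integer.toHexString(pixel).toUpperCase()
            + " -> red=" + red(pixel)
            + ", green=" + green(pixel)
            + ", blue=" + blue(pixel));
        // prints "FFFAC4 -> red=255, green=250, blue=196"
    }
}
```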

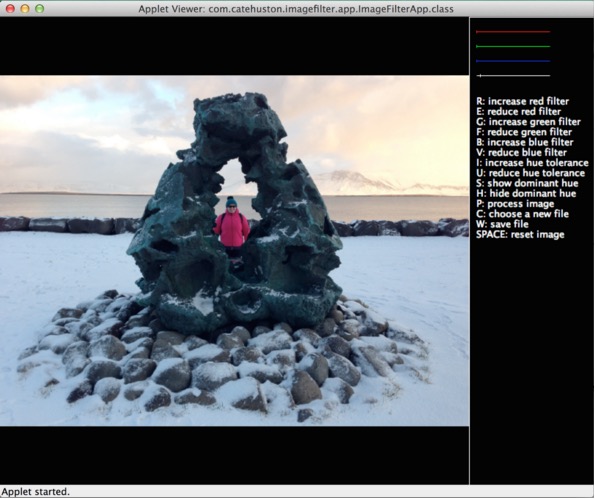

Running the App

In Figure 11.4, we have a picture of our app running. It's very much developer-designed, I know, but we only have 500 lines of Java to work with so something had to suffer! You can see the list of commands on the right. Some things we can do:

- Adjust the RGB filters.

- Adjust the "hue tolerance".

- Set the dominant hue filters, to either show or hide the dominant hue.

- Apply our current setting (it is infeasible to run this every key press).

- Reset the image.

- Save the image we have made.

Figure 11.4 - The App

Processing makes it simple to create a little application and do image manipulation; it has a very visual focus. We'll work with the Java-based version, although Processing has now been ported to other languages.

For this tutorial, I use Processing in Eclipse by adding core.jar to my build path. If you want, you can use the Processing IDE, which removes the need for a lot of boilerplate Java code. If you later want to port it over to Processing.js and upload it online, you need to replace the file chooser with something else.

There are detailed instructions with screenshots in the project's repository. If you are familiar with Eclipse and Java already you may not need them.

Processing Basics

Size and Color

We don't want our app to be a tiny grey window, so the two essential methods that we will start by overriding are setup() and draw(). The setup() method is only called when the app starts, and is where we do things like set the size of the app window. The draw() method is called for every animation frame, or after some action, and can be triggered by calling redraw(). (As covered in the Processing documentation, draw() should not be called explicitly.)

Processing is designed to work nicely to create animated sketches, but in this case we don't want animation, we want to respond to key presses. To prevent animation (which would be a drag on performance) we will call noLoop() from setup(). This means that draw() will only be called immediately after setup(), and whenever we call redraw().

    private static final int WIDTH = 360;
    private static final int HEIGHT = 240;

    public void setup() {
      noLoop();

      // Set up the view.
      size(WIDTH, HEIGHT);
      background(0);
    }

    public void draw() {
      background(0);
    }

These don't really do much yet, but try running the app again, adjusting the constants in WIDTH and HEIGHT, to see different sizes.

background(0) specifies a black background. Try changing the number passed to background() and see what happens: a single number is treated as a gray level, so if you only pass one number in, the result is always greyscale. Alternatively, you can call background(int r, int g, int b).

PImage

The PImage object is the Processing object that represents an image. We're going to be using this a lot, so it's worth reading through the documentation. It has three fields (Table 11.1) as well as some methods that we will use (Table 11.2).

| Field | Description |
| --- | --- |
| pixels[] | Array containing the color of every pixel in the image |
| width | Image width in pixels |
| height | Image height in pixels |

: Table 11.1 - PImage fields

| Method | Description |
| --- | --- |
| loadPixels | Loads the pixel data for the image into its pixels[] array |
| updatePixels | Updates the image with the data in its pixels[] array |
| resize | Changes the size of an image to a new width and height |
| get | Reads the color of any pixel or grabs a rectangle of pixels |
| set | Writes a color to any pixel or writes an image into another |
| save | Saves the image to a TIFF, TARGA, PNG, or JPEG file |

: Table 11.2 - PImage methods

File Chooser

Processing handles most of the file choosing process; we just need to call selectInput(), and implement a callback (which must be public).

To people familiar with Java this might seem odd; a listener or a lambda expression might make more sense. However, as Processing was developed as a tool for artists, for the most part these things have been abstracted away by the language to keep it unintimidating. This is a choice the designers made: to prioritize simplicity and approachability over power and flexibility. If you use the stripped-down Processing editor, rather than Processing as a library in Eclipse, you don't even need to define class names.

Other language designers with different target audiences make different choices, as they should. For instance, in Haskell, a purely functional language, purity of functional language paradigms is prioritised over everything else. This makes it a better tool for mathematical problems than for anything requiring IO.

    // Called on key press.
    private void chooseFile() {
      // Choose the file.
      selectInput("Select a file to process:", "fileSelected");
    }

    public void fileSelected(File file) {
      if (file == null) {
        println("User hit cancel.");
      } else {
        // save the image
        redraw(); // update the display
      }
    }

Responding to Key Presses

Normally in Java, responding to key presses requires adding listeners and implementing anonymous functions. However, as with the file chooser, Processing handles a lot of this for us. We just need to implement keyPressed().

    public void keyPressed() {
      print("key pressed: " + key);
    }

If you run the app again, every time you press a key it will output it to the console. Later, you'll want to do different things depending on what key was pressed, and to do this you just switch on the key value. (This exists in the PApplet superclass, and contains the last key pressed.)
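Switching on the key value might look something like the sketch below. It is plain Java so that it runs without Processing; in a real sketch the switch would sit inside keyPressed() and read the `key` field. The key bindings and action names here are hypothetical, chosen only for illustration.

```java
// Sketch: map a pressed key to an action with a switch statement.
final class KeyDispatch {
    // Hypothetical bindings; the real app defines its own keys.
    static String actionFor(char key) {
        switch (key) {
            case 'c': return "choose file";
            case 'r': return "reset image";
            case 's': return "save image";
            default:  return "ignored";
        }
    }

    public static void main(String[] args) {
        for (char key : new char[] {'c', 'r', 's', 'x'}) {
            System.out.println("key pressed: " + key + " -> " + actionFor(key));
        }
    }
}
```

Returning a value from a small method like this, rather than acting directly inside the handler, keeps the key mapping easy to check in a unit test.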

Writing Tests

This app doesn't do a lot yet, but we can already see a number of places where things can go wrong; for example, triggering the wrong action with key presses. As we add complexity, we add more potential problems, such as updating the image state incorrectly, or miscalculating pixel colors after applying a filter. I also just enjoy (some think weirdly) writing unit tests. Whilst some people seem to think of testing as a thing that delays checking code in, I see tests as my #1 debugging tool, and as an opportunity to deeply understand what is going on in my code.

I adore Processing, but it's designed to create visual applications, and in this area maybe unit testing isn't a huge concern. It's clear it isn't written for testability; in fact it's written in such a way that makes it untestable, as is. Part of this is because it hides complexity, and some of that hidden complexity is really useful in writing unit tests. The use of static and final methods makes it much harder to use mocks (objects that record interaction and allow you to fake part of your system to verify another part is behaving correctly), which rely on the ability to subclass.

We might start a greenfield project with great intentions to do Test Driven Development (TDD) and achieve perfect test coverage, but in reality we are usually looking at a mass of code written by various and assorted people and trying to figure out what it is supposed to be doing, and how and why it is going wrong. So maybe we don't write perfect tests, but writing tests at all will help us navigate the situation, document what is happening and move forward.

We create "seams" that allow us to break something up from its amorphous mass of tangled pieces and verify it in parts. To do this, we will sometimes create wrapper classes that can be mocked. These classes do nothing more than hold a collection of similar methods, or forward calls on to another object that cannot be mocked (due to final or static methods), and as such they are very dull to write, but key to creating seams and making the code testable.

I used JUnit for tests, as I was working in Java with Processing as a library. For mocking I used Mockito. You can download Mockito and add the JAR to your build path in the same way you added core.jar. I created two helper classes that make it possible to mock and test the app (otherwise we can't test behavior involving PImage or PApplet methods).

IFAImage is a thin wrapper around PImage. PixelColorHelper is a wrapper around applet pixel color methods. These wrappers call the final and static methods, but the caller methods are neither final nor static themselves, which allows them to be mocked. These are deliberately lightweight, and we could have gone further, but this was sufficient to address the major problem of testability when using Processing: static and final methods. The goal was to make an app, after all, not a unit testing framework for Processing!
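To make the idea of a seam concrete, here is a minimal sketch in plain Java with no Processing or Mockito dependency. Every class name in it (`LibraryImage`, `ImageWrapper`, `RedCounter`, `FakeImage`) is invented for illustration; `LibraryImage` stands in for a library class with a final method, and the hand-written `FakeImage` plays the role that a Mockito mock would play in the real tests.

```java
// Sketch: a thin wrapper creates a seam around a final method.
final class SeamDemo {
    public static void main(String[] args) {
        // In a test, the fake replaces the real image entirely.
        ImageWrapper fake = new FakeImage(0xFF0000, 0x00FF00, 0xFF00FF);
        System.out.println("fully red pixels: " + RedCounter.countRed(fake, 3));
    }
}

// Stand-in for a library class we cannot change: its method is final.
class LibraryImage {
    public final int pixelAt(int index) { return 0xFF0000; }
}

// Thin wrapper: only forwards the call, but its own method is not final.
class ImageWrapper {
    private final LibraryImage image;
    ImageWrapper(LibraryImage image) { this.image = image; }
    public int getPixel(int index) { return image.pixelAt(index); }
}

// Code under test depends on the wrapper, never on LibraryImage directly.
class RedCounter {
    static int countRed(ImageWrapper img, int numberOfPixels) {
        int count = 0;
        for (int i = 0; i < numberOfPixels; i++) {
            if (((img.getPixel(i) >> 16) & 0xFF) == 255) {
                count++;
            }
        }
        return count;
    }
}

// Test double: overriding works because getPixel is neither final nor static.
class FakeImage extends ImageWrapper {
    private final int[] pixels;
    FakeImage(int... pixels) { super(null); this.pixels = pixels; }
    @Override public int getPixel(int index) { return pixels[index]; }
}
```

The wrapper itself contains no logic worth testing, which is the point: all the interesting behavior lives in code that can be exercised against a fake.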

A class called ImageState forms the "model" of this application, removing as much logic from the class extending PApplet as possible, for better testability. It also makes for a cleaner design and separation of concerns: the App controls the interactions and the UI, not the image manipulation.

Do-It-Yourself Filters

RGB Filters

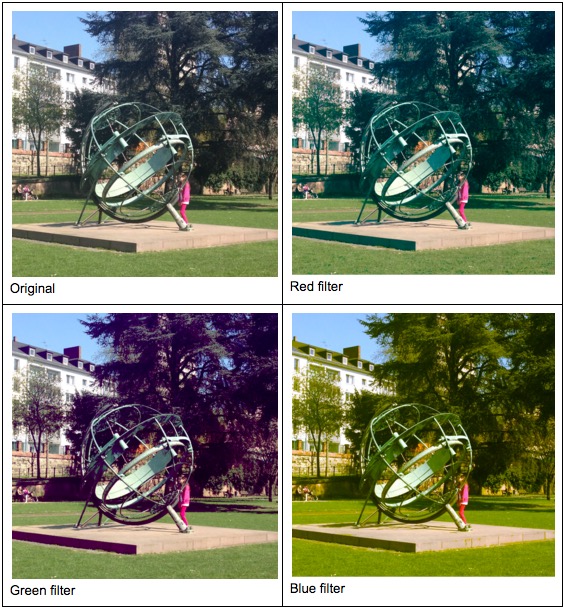

Before we start writing more complicated pixel processing, we can start with a short exercise that will get us comfortable doing pixel manipulation. We'll create standard (red, green, blue) color filters that will allow us to create the same effect as placing a colored plate over the lens of a camera, only letting through light with enough red (or green, or blue).

By applying different filters to this image (Figure 11.5, taken on a spring trip to Frankfurt) it's almost like the seasons are different. (Remember the four-seasons paintings we imagined earlier?) See how much more green the tree becomes when the red filter is applied.

Figure 11.5 - Four (Fake) Seasons in Frankfurt

How do we do it?

- Set the filter. (You can combine red, green and blue filters as in the image earlier; I haven't in these examples so that the effect is clearer.)

- For each pixel in the image, check its RGB value.

  - If the red is less than the red filter, set the red to zero.

  - If the green is less than the green filter, set the green to zero.

  - If the blue is less than the blue filter, set the blue to zero.

- Any pixel with insufficient of all of these colors will be black.

Although our image is two-dimensional, the pixels live in a one-dimensional array starting top-left and moving left to right, top to bottom. The array indices for a 4x4 image are shown here:

| 0 | 1 | 2 | 3 |
| 4 | 5 | 6 | 7 |
| 8 | 9 | 10 | 11 |
| 12 | 13 | 14 | 15 |

: Table 11.3 - Pixel indices for a 4x4 image
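The row-major layout in the table can be expressed as a small formula: index = y * width + x. A minimal sketch in plain Java follows; the class and method names are made up for illustration.

```java
// Sketch: convert between (x, y) coordinates and a row-major array index.
final class PixelIndex {
    // Each full row above contributes `width` pixels, then step x columns in.
    static int indexOf(int x, int y, int width) { return y * width + x; }

    // The inverse mapping recovers the column and row from an index.
    static int columnOf(int index, int width) { return index % width; }
    static int rowOf(int index, int width)    { return index / width; }

    public static void main(String[] args) {
        int width = 4;
        System.out.println(indexOf(2, 1, width));  // prints 6
        System.out.println(columnOf(13, width));   // prints 1
        System.out.println(rowOf(13, width));      // prints 3
    }
}
```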

    public void applyColorFilter(PApplet applet, IFAImage img, int minRed,
        int minGreen, int minBlue, int colorRange) {
      img.loadPixels();
      int numberOfPixels = img.getPixels().length;
      for (int i = 0; i < numberOfPixels; i++) {
        int pixel = img.getPixel(i);
        float alpha = pixelColorHelper.alpha(applet, pixel);
        float red = pixelColorHelper.red(applet, pixel);
        float green = pixelColorHelper.green(applet, pixel);
        float blue = pixelColorHelper.blue(applet, pixel);

        // Zero out any channel that falls below its filter threshold.
        red = (red >= minRed) ? red : 0;
        green = (green >= minGreen) ? green : 0;
        blue = (blue >= minBlue) ? blue : 0;

        img.setPixel(i, pixelColorHelper.color(applet, red, green, blue, alpha));
      }
    }

Color

As our first example of an image filter showed, the concept and representation of colors in a program is very important to understanding how our filters work. To prepare ourselves for working on our next filter, let's explore the concept of color a bit more.

We were using a concept in the previous section called "color space", which is a way of representing color digitally. Kids mixing paints learn that colors can be made from other colors; things work slightly differently in digital (less risk of being covered in paint!) but similar. Processing makes it really easy to work with whatever color space you want, but you need to know which one to pick, so it's important to understand how they work.

RGB colors

The color space that most programmers are familiar with is RGBA: red, green, blue and alpha; it's what we were using above. In hexadecimal (base 16), the first two digits are the amount of red, the second two green, the third two blue, and the final two (if they are there) are the alpha value. The values range from 00 in base 16 (0 in base 10) through to FF (255 in base 10). The alpha represents opacity, where 0 is transparent and 100% is opaque.

HSB or HSV colors

This color space is not quite as well known as RGB. The first number represents the hue, the second number the saturation (how intense the color is), and the third number the brightness. The HSB color space can be represented by a cone: the hue is the position around the cone, saturation the distance from the centre, and brightness the height (0 brightness is black).
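To get a feel for HSB values, the sketch below converts the sample pixel FFFAC4 from earlier using the JDK's `java.awt.Color.RGBtoHSB`, which returns hue, saturation and brightness each in the range 0 to 1. This is an illustration outside Processing (the app itself uses Processing's own color methods), and the class and method names are invented for this example.

```java
// Sketch: convert a packed 0xRRGGBB color to HSB with the JDK utility method.
final class HsbDemo {
    // Returns {hue, saturation, brightness}, each between 0 and 1.
    static float[] toHsb(int rgb) {
        int r = (rgb >> 16) & 0xFF;
        int g = (rgb >> 8) & 0xFF;
        int b = rgb & 0xFF;
        // Passing null asks RGBtoHSB to allocate the result array.
        return java.awt.Color.RGBtoHSB(r, g, b, null);
    }

    public static void main(String[] args) {
        float[] hsb = toHsb(0xFFFAC4);
        // Scale hue to degrees around the cone, the others to percentages.
        System.out.printf("hue=%.0f degrees, saturation=%.0f%%, brightness=%.0f%%%n",
            hsb[0] * 360, hsb[1] * 100, hsb[2] * 100);
    }
}
```

The pale yellow pixel comes out with a hue in the yellow part of the circle, low saturation and full brightness, which matches how it looks.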

Now that we're comfortable with pixel manipulation, let's do something that we could only do digitally. Digitally, we can manipulate the image in a way that isn't so uniform.

When I look through my stream of pictures I can see themes emerging. The night series I took at sunset from a boat on Hong Kong harbour, the grey of North Korea, the lush greens of Bali, the icy whites and pale blues of an Icelandic winter. Can we take a picture and pull out that main color that dominates the scene?

It makes sense to use the HSB color space for this, since we are interested in the hue when figuring out what the main color is. It's possible to do this using RGB values, but more difficult (we would have to compare all three values) and it would be more sensitive to darkness. We can change to the HSB color space using colorMode.

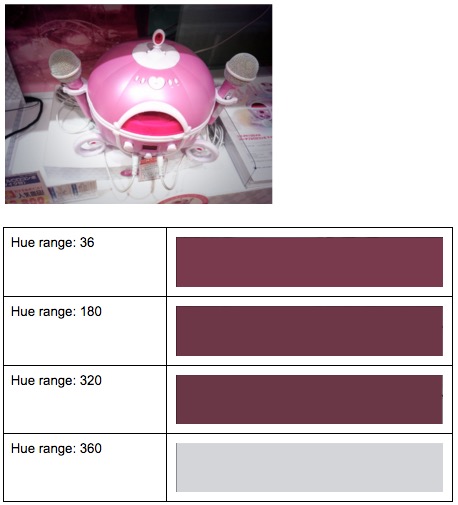

Having settled on this color space, it's simpler than it would have been using RGB. We need to find the hue of each pixel, and figure out which is most "popular". We probably don't want to be exact; we want to group very similar hues together, and we can handle this using two strategies.

Firstly we will round the decimals that come back to whole numbers, as this makes it simple to determine which "bucket" we put each pixel in. Secondly we can change the range of the hues. If we think back to the cone representation above, we might think of hues as having 360 degrees (like a circle). Processing uses 255 by default, which is the same as is typical for RGB (255 is FF in hexadecimal). The higher the range we use, the more distinct the hues in the picture will be. Using a smaller range will allow us to group together similar hues. Using a 360 degree range, it's unlikely that we will be able to tell the difference between a hue of 224 and a hue of 225, as the difference is very small. If we make the range one-third of that, 120, both these hues become 75 after rounding.
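The arithmetic behind that last claim can be checked with a short sketch in plain Java. It rescales a hue from one range to another and rounds it into a bucket; the class and method names are hypothetical, and in the app itself Processing does the rescaling when the range is passed to colorMode.

```java
// Sketch: rescale a hue to a smaller range so that similar hues share a bucket.
final class HueBuckets {
    static int bucket(float hue, int fromRange, int toRange) {
        return Math.round(hue * toRange / fromRange);
    }

    public static void main(String[] args) {
        // 224 / 3 = 74.67 and 225 / 3 = 75.0, so both land in bucket 75.
        System.out.println(bucket(224f, 360, 120)); // prints 75
        System.out.println(bucket(225f, 360, 120)); // prints 75
        System.out.println(bucket(222f, 360, 120)); // prints 74
    }
}
```

Note that a hue at the very top of the source range rounds to the target range itself, so an array indexed by bucket needs to allow for that edge value.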

We can change the range of hues using colorMode. If we call colorMode(HSB, 120) we have just made our hue detection a bit less than half as exact as if we used the 255 range. We also know that our hues will fall into 120 "buckets", so we can simply go through our image, get the hue for a pixel, and add one to the corresponding count in an array. This will be \(O(n)\), where \(n\) is the number of pixels, as it requires action on each one.

    for (int px : pixels) {
      int hue = Math.round(hue(px));
      hues[hue]++;
    }

At the end we can print this hue to the screen, or display it next to the picture (Figure 11.6).

Figure 11.6 - Dominant hue versus size of range (number of buckets) used

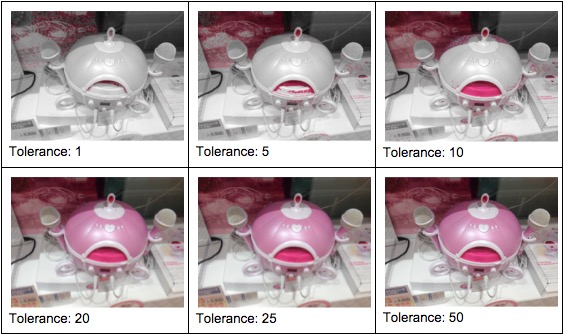

Once we've extracted the "dominant" hue, we can choose to either show or hide it in the image. We can show the dominant hue with varying tolerance (ranges around it that we will accept). Pixels that don't fall into this range can be changed to grayscale by setting the value based on the brightness. Figure 11.7 shows the dominant hue determined using a range of 240, and with varying tolerance. The tolerance is the amount either side of the most popular hue that gets grouped together.

Figure 11.7 - Showing dominant hue
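Because hues sit on a circle, a tolerance band around a dominant hue near 0 has to wrap around to the top of the range. One way to express this is as a circular distance, sketched below in plain Java. This is an alternative formulation for illustration, not the chapter's own `hueInRange` method; the names are invented, and the comparison here is inclusive.

```java
// Sketch: test whether a hue lies within a tolerance of the dominant hue,
// measuring distance the short way around the hue circle.
final class HueTolerance {
    static float circularDistance(float a, float b, float range) {
        float d = Math.abs(a - b) % range;
        return Math.min(d, range - d);
    }

    static boolean withinTolerance(float hue, float dominant,
                                   float tolerance, float range) {
        return circularDistance(hue, dominant, range) <= tolerance;
    }

    public static void main(String[] args) {
        // With a range of 240, hues 235 and 5 are only 10 apart.
        System.out.println(withinTolerance(235f, 5f, 20f, 240f));  // prints true
        System.out.println(withinTolerance(100f, 5f, 20f, 240f));  // prints false
    }
}
```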

Alternatively, we can hide the dominant hue. In Figure 11.8, the images are placed side by side: the original in the middle, on the left the dominant hue (the brownish color of the path) is shown, and on the right the dominant hue is hidden (range 320, tolerance 20).

Figure 11.8 - Hiding dominant hue

Each image requires a double pass (looking at each pixel twice), so on images with a large number of pixels it can take a noticeable amount of time.

    public HSBColor getDominantHue(PApplet applet, IFAImage image, int hueRange) {
      image.loadPixels();
      int numberOfPixels = image.getPixels().length;
      int[] hues = new int[hueRange];
      float[] saturations = new float[hueRange];
      float[] brightnesses = new float[hueRange];

      for (int i = 0; i < numberOfPixels; i++) {
        int pixel = image.getPixel(i);
        int hue = Math.round(pixelColorHelper.hue(applet, pixel));
        float saturation = pixelColorHelper.saturation(applet, pixel);
        float brightness = pixelColorHelper.brightness(applet, pixel);
        hues[hue]++;
        saturations[hue] += saturation;
        brightnesses[hue] += brightness;
      }

      // Find the most common hue.
      int hueCount = hues[0];
      int hue = 0;
      for (int i = 1; i < hues.length; i++) {
        if (hues[i] > hueCount) {
          hueCount = hues[i];
          hue = i;
        }
      }

      // Return the color to display.
      float s = saturations[hue] / hueCount;
      float b = brightnesses[hue] / hueCount;
      return new HSBColor(hue, s, b);
    }

    public void processImageForHue(PApplet applet, IFAImage image, int hueRange,
        int hueTolerance, boolean showHue) {
      applet.colorMode(PApplet.HSB, (hueRange - 1));
      image.loadPixels();
      int numberOfPixels = image.getPixels().length;
      HSBColor dominantHue = getDominantHue(applet, image, hueRange);
      // Manipulate photo, grayscale any pixel that isn't close to that hue.
      float lower = dominantHue.h - hueTolerance;
      float upper = dominantHue.h + hueTolerance;
      for (int i = 0; i < numberOfPixels; i++) {
        int pixel = image.getPixel(i);
        float hue = pixelColorHelper.hue(applet, pixel);
        if (hueInRange(hue, hueRange, lower, upper) == showHue) {
          float brightness = pixelColorHelper.brightness(applet, pixel);
          image.setPixel(i, pixelColorHelper.color(applet, brightness));
        }
      }
      image.updatePixels();
    }

Combining Filters

With the UI as it is, the user can combine the red, green, and blue filters together. If they combine the dominant hue filters with the red, green, and blue filters the results can sometimes be a little unexpected, because of changing the color spaces.

Processing has some built-in methods that support the manipulation of images; for example, invert and blur.

To achieve effects like sharpening, blurring, or sepia we apply matrices. For every pixel of the image, take the sum of products where each product is the color value of the current pixel or a neighbor of it, with the corresponding value of the filter matrix. There are some special matrices of specific values that sharpen images.
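The sum-of-products step can be sketched in plain Java on a small grayscale image stored as a 2D array. The 3x3 matrix below is a commonly used sharpening kernel, chosen here as an example rather than taken from the app; the class and method names are invented, and copying border pixels unchanged is a simplification.

```java
// Sketch: apply a 3x3 filter matrix to a grayscale image (values 0 to 255).
final class Convolution {
    // A common sharpening kernel; its entries sum to 1, so flat areas are unchanged.
    static final int[][] SHARPEN = {
        { 0, -1,  0},
        {-1,  5, -1},
        { 0, -1,  0}
    };

    static int[][] apply(int[][] gray, int[][] kernel) {
        int height = gray.length;
        int width = gray[0].length;
        int[][] out = new int[height][width];
        for (int y = 0; y < height; y++) {
            for (int x = 0; x < width; x++) {
                // Border pixels lack a full neighborhood, so copy them as-is.
                if (y == 0 || x == 0 || y == height - 1 || x == width - 1) {
                    out[y][x] = gray[y][x];
                    continue;
                }
                // Sum of products of each neighbor with its kernel entry.
                int sum = 0;
                for (int ky = -1; ky <= 1; ky++) {
                    for (int kx = -1; kx <= 1; kx++) {
                        sum += kernel[ky + 1][kx + 1] * gray[y + ky][x + kx];
                    }
                }
                // Clamp back into the valid 0 to 255 range.
                out[y][x] = Math.max(0, Math.min(255, sum));
            }
        }
        return out;
    }

    public static void main(String[] args) {
        int[][] image = {
            {100, 100, 100},
            {100, 120, 100},
            {100, 100, 100}
        };
        // Center: 5 * 120 - 4 * 100 = 200, so the bright spot is exaggerated.
        System.out.println(apply(image, SHARPEN)[1][1]); // prints 200
    }
}
```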

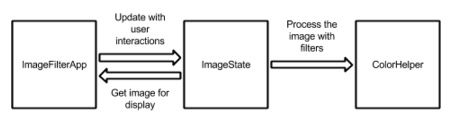

Architecture

There are three main components to the app (Figure 11.9).

The App

The app consists of one file: ImageFilterApp.java. This extends PApplet (the Processing app superclass) and handles layout, user interaction, etc. This class is the hardest to test, so we want to keep it as small as possible.

Model

Model consists of three files: HSBColor.java is a simple container for HSB colors (consisting of hue, saturation, and brightness). IFAImage is a wrapper around PImage for testability. (PImage contains a number of final methods which cannot be mocked.) Finally, ImageState.java is the object which describes the state of the image (what level of filters should be applied, and which filters) and handles loading the image. (Note: The image needs to be reloaded whenever color filters are adjusted down, and whenever the dominant hue is recalculated. For clarity, we just reload each time the image is processed.)

Color

Color consists of two files: ColorHelper.java is where all the image processing and filtering takes place, and PixelColorHelper.java abstracts out final PApplet methods for pixel colors for testability.

Figure 11.9 - Architecture diagram

Wrapper Classes and Tests

Briefly mentioned above, there are two wrapper classes (IFAImage and PixelColorHelper) that wrap library methods for testability. This is because, in Java, the keyword "final" indicates a method that cannot be overridden or hidden by subclasses, which means it cannot be mocked.

PixelColorHelper wraps methods on the applet. This means we need to pass the applet in to each method call. (Alternatively, we could make it a field and set it on initialization.)

    package com.catehuston.imagefilter.color;

    import processing.core.PApplet;

    public class PixelColorHelper {

      public float alpha(PApplet applet, int pixel) {
        return applet.alpha(pixel);
      }

      public float blue(PApplet applet, int pixel) {
        return applet.blue(pixel);
      }

      public float brightness(PApplet applet, int pixel) {
        return applet.brightness(pixel);
      }

      public int color(PApplet applet, float greyscale) {
        return applet.color(greyscale);
      }

      public int color(PApplet applet, float red, float green, float blue,
          float alpha) {
        return applet.color(red, green, blue, alpha);
      }

      public float green(PApplet applet, int pixel) {
        return applet.green(pixel);
      }

      public float hue(PApplet applet, int pixel) {
        return applet.hue(pixel);
      }

      public float red(PApplet applet, int pixel) {
        return applet.red(pixel);
      }

      public float saturation(PApplet applet, int pixel) {
        return applet.saturation(pixel);
      }
    }

IFAImage is a wrapper around PImage, so in our app we don't initialize a PImage, but rather an IFAImage, although we do have to expose the PImage so that it can be rendered.

    package com.catehuston.imagefilter.model;

    import processing.core.PApplet;
    import processing.core.PImage;

    public class IFAImage {

      private PImage image;

      public IFAImage() {
        image = null;
      }

      public PImage image() {
        return image;
      }

      public void update(PApplet applet, String filepath) {
        image = null;
        image = applet.loadImage(filepath);
      }

      // Wrapped methods from PImage.
      public int getHeight() {
        return image.height;
      }

      public int getPixel(int px) {
        return image.pixels[px];
      }

      public int[] getPixels() {
        return image.pixels;
      }

      public int getWidth() {
        return image.width;
      }

      public void loadPixels() {
        image.loadPixels();
      }

      public void resize(int width, int height) {
        image.resize(width, height);
      }

      public void save(String filepath) {
        image.save(filepath);
      }

      public void setPixel(int px, int color) {
        image.pixels[px] = color;
      }

      public void updatePixels() {
        image.updatePixels();
      }
    }

Finally, we have our simple container class, HSBColor. Note that it is immutable (once created, it cannot be changed). Immutable objects are better for thread safety (something we have no need of here!) but are also easier to understand and reason about. In general, I tend to make simple model classes immutable unless I find a good reason for them not to be.

Some of you may know that there are already classes representing color in Processing and in Java itself. Without going too much into the details of these, both of them are more focused on RGB color, and the Java class in particular adds way more complexity than we need. We would probably be okay if we did want to use Java's awt.Color; however, awt GUI components cannot be used in Processing, so for our purposes creating this simple container class to hold the bits of data we need is easiest.

    package com.catehuston.imagefilter.model;

    public class HSBColor {

      public final float h;
      public final float s;
      public final float b;

      public HSBColor(float h, float s, float b) {
        this.h = h;
        this.s = s;
        this.b = b;
      }
    }

ColorHelper and Associated Tests

ColorHelper is where all the image manipulation lives. The methods in this class could be static if not for needing a PixelColorHelper. (Although we won't get into the debate about the merits of static methods here.)

    package com.catehuston.imagefilter.color;

    import processing.core.PApplet;

    import com.catehuston.imagefilter.model.HSBColor;
    import com.catehuston.imagefilter.model.IFAImage;

    public class ColorHelper {

      private final PixelColorHelper pixelColorHelper;

      public ColorHelper(PixelColorHelper pixelColorHelper) {
        this.pixelColorHelper = pixelColorHelper;
      }

      public boolean hueInRange(float hue, int hueRange, float lower, float upper) {
        // Need to compensate for it being circular - can go around.
        if (lower < 0) {
          lower += hueRange;
        }
        if (upper > hueRange) {
          upper -= hueRange;
        }
        if (lower < upper) {
          return hue < upper && hue > lower;
        } else {
          return hue < upper || hue > lower;
        }
      }

      public HSBColor getDominantHue(PApplet applet, IFAImage image, int hueRange) {
        image.loadPixels();
        int numberOfPixels = image.getPixels().length;
        int[] hues = new int[hueRange];
        float[] saturations = new float[hueRange];
        float[] brightnesses = new float[hueRange];

        for (int i = 0; i < numberOfPixels; i++) {
          int pixel = image.getPixel(i);
          int hue = Math.round(pixelColorHelper.hue(applet, pixel));
          float saturation = pixelColorHelper.saturation(applet, pixel);
          float brightness = pixelColorHelper.brightness(applet, pixel);
          hues[hue]++;
          saturations[hue] += saturation;
          brightnesses[hue] += brightness;
        }

        // Find the most common hue.
        int hueCount = hues[0];
        int hue = 0;
        for (int i = 1; i < hues.length; i++) {
          if (hues[i] > hueCount) {
            hueCount = hues[i];
            hue = i;
          }
        }

        // Return the color to display.
        float s = saturations[hue] / hueCount;
        float b = brightnesses[hue] / hueCount;
        return new HSBColor(hue, s, b);
      }

      public void processImageForHue(PApplet applet, IFAImage image, int hueRange,
          int hueTolerance, boolean showHue) {
        applet.colorMode(PApplet.HSB, (hueRange - 1));
        image.loadPixels();
        int numberOfPixels = image.getPixels().length;
        HSBColor dominantHue = getDominantHue(applet, image, hueRange);
        // Manipulate photo, grayscale any pixel that isn't close to that hue.
        float lower = dominantHue.h - hueTolerance;
        float upper = dominantHue.h + hueTolerance;
        for (int i = 0; i < numberOfPixels; i++) {
          int pixel = image.getPixel(i);
          float hue = pixelColorHelper.hue(applet, pixel);
          if (hueInRange(hue, hueRange, lower, upper) == showHue) {
            float brightness = pixelColorHelper.brightness(applet, pixel);
            image.setPixel(i, pixelColorHelper.color(applet, brightness));
          }
        }
        image.updatePixels();
      }

      public void applyColorFilter(PApplet applet, IFAImage image, int minRed,
          int minGreen, int minBlue, int colorRange) {
        applet.colorMode(PApplet.RGB, colorRange);
        image.loadPixels();
        int numberOfPixels = image.getPixels().length;
        for (int i = 0; i < numberOfPixels; i++) {
          int pixel = image.getPixel(i);
          float alpha = pixelColorHelper.alpha(applet, pixel);
          float red = pixelColorHelper.red(applet, pixel);
          float green = pixelColorHelper.green(applet, pixel);
          float blue = pixelColorHelper.blue(applet, pixel);

          // Zero out any channel that falls below its minimum.
          red = (red >= minRed) ? red : 0;
          green = (green >= minGreen) ? green : 0;
          blue = (blue >= minBlue) ? blue : 0;

          image.setPixel(i, pixelColorHelper.color(applet, red, green, blue, alpha));
        }
      }
    }

We don't want to test this with whole images, because we want images whose properties we know and can reason about. We approximate this by mocking the images and making them return an array of pixels (in this case, 5). This allows us to verify that the behavior is as expected. Earlier we covered the concept of mock objects, and here we see their use. We are using Mockito as our mock object framework.
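Stepping back to hueInRange for a moment: the wrap-around handling is easy to misread, so here is a small standalone sketch of the same check with a few sample calls. The class name HueRangeSketch and the sample values are made up for illustration; the logic is copied from the method above and does not depend on Processing.

```java
class HueRangeSketch {

    // Same logic as ColorHelper.hueInRange: hue is circular, so a window that
    // runs past either end of the range wraps around to the other end.
    static boolean hueInRange(float hue, int hueRange, float lower, float upper) {
        if (lower < 0) {
            lower += hueRange;
        }
        if (upper > hueRange) {
            upper -= hueRange;
        }
        if (lower < upper) {
            // Ordinary window: hue must lie strictly between the bounds.
            return hue < upper && hue > lower;
        }
        // Wrapped window: hue lies on either side of the seam.
        return hue < upper || hue > lower;
    }

    public static void main(String[] args) {
        int hueRange = 100;
        // Dominant hue 30, tolerance 5: the window is (25, 35).
        System.out.println(hueInRange(30f, hueRange, 25f, 35f)); // true
        System.out.println(hueInRange(50f, hueRange, 25f, 35f)); // false
        // Dominant hue 2, tolerance 5: the window (-3, 7) wraps to (97, 7).
        System.out.println(hueInRange(99f, hueRange, -3f, 7f));  // true
        System.out.println(hueInRange(50f, hueRange, -3f, 7f));  // false
    }
}
```

Note that both bounds are exclusive, so a hue exactly equal to lower or upper counts as out of range.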

To create a mock we use the @Mock annotation on an instance variable, and it will be mocked at runtime by the MockitoJUnitRunner.

To stub (set the behavior of) a method, we use:

    when(mock.methodCall()).thenReturn(value)

To verify a method was called, we use verify(mock).methodCall().
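If mocking is new to you, it may help to see roughly what stubbing and verifying amount to when written by hand. The sketch below uses no Mockito at all; PixelSource, FakePixelSource, and countPixels are hypothetical names invented for this illustration and are not part of the project. Mockito generates the equivalent of FakePixelSource for us at runtime.

```java
import java.util.ArrayList;
import java.util.List;

class HandRolledMockSketch {

    // Hypothetical interface standing in for the part of IFAImage under test.
    interface PixelSource {
        void loadPixels();
        int[] getPixels();
    }

    // A hand-written test double: it returns canned data ("stubbing") and
    // records which methods were called so a test can check them ("verifying").
    static class FakePixelSource implements PixelSource {
        private final int[] cannedPixels;
        final List<String> calls = new ArrayList<>();

        FakePixelSource(int[] cannedPixels) {
            this.cannedPixels = cannedPixels;
        }

        @Override
        public void loadPixels() {
            calls.add("loadPixels");
        }

        @Override
        public int[] getPixels() {
            calls.add("getPixels");
            return cannedPixels;
        }
    }

    // Hypothetical code under test: loads the pixels, then counts them.
    static int countPixels(PixelSource source) {
        source.loadPixels();
        return source.getPixels().length;
    }

    public static void main(String[] args) {
        FakePixelSource fake =
            new FakePixelSource(new int[] {1000, 1010, 1030, 1040, 1050});
        System.out.println(countPixels(fake));                 // 5
        System.out.println(fake.calls.contains("loadPixels")); // true
    }
}
```

The trade-off is the usual one: a hand-written fake is explicit but has to be maintained for every interface, whereas a mocking framework removes that boilerplate.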

We'll show a few example test cases here; if you'd like to see the rest, visit the source folder for this project in the 500 Lines or Less GitHub repository.

    package com.catehuston.imagefilter.color;

    /* ... Imports omitted ... */

    @RunWith(MockitoJUnitRunner.class)
    public class ColorHelperTest {

      @Mock PApplet applet;
      @Mock IFAImage image;
      @Mock PixelColorHelper pixelColorHelper;

      ColorHelper colorHelper;

      private static final int px1 = 1000;
      private static final int px2 = 1010;
      private static final int px3 = 1030;
      private static final int px4 = 1040;
      private static final int px5 = 1050;
      private static final int[] pixels = { px1, px2, px3, px4, px5 };

      @Before public void setUp() throws Exception {
        colorHelper = new ColorHelper(pixelColorHelper);
        when(image.getPixels()).thenReturn(pixels);
        setHsbValuesForPixel(0, px1, 30F, 5F, 10F);
        setHsbValuesForPixel(1, px2, 20F, 6F, 11F);
        setHsbValuesForPixel(2, px3, 30F, 7F, 12F);
        setHsbValuesForPixel(3, px4, 50F, 8F, 13F);
        setHsbValuesForPixel(4, px5, 30F, 9F, 14F);
      }

      private void setHsbValuesForPixel(int px, int color, float h, float s, float b) {
        when(image.getPixel(px)).thenReturn(color);
        when(pixelColorHelper.hue(applet, color)).thenReturn(h);
        when(pixelColorHelper.saturation(applet, color)).thenReturn(s);
        when(pixelColorHelper.brightness(applet, color)).thenReturn(b);
      }

      private void setRgbValuesForPixel(int px, int color, float r, float g, float b,
          float alpha) {
        when(image.getPixel(px)).thenReturn(color);
        when(pixelColorHelper.red(applet, color)).thenReturn(r);
        when(pixelColorHelper.green(applet, color)).thenReturn(g);
        when(pixelColorHelper.blue(applet, color)).thenReturn(b);
        when(pixelColorHelper.alpha(applet, color)).thenReturn(alpha);
      }

      @Test public void testHsbColorFromImage() {
        HSBColor color = colorHelper.getDominantHue(applet, image, 100);
        verify(image).loadPixels();

        assertEquals(30F, color.h, 0);
        assertEquals(7F, color.s, 0);
        assertEquals(12F, color.b, 0);
      }

      @Test public void testProcessImageNoHue() {
        when(pixelColorHelper.color(applet, 11F)).thenReturn(11);
        when(pixelColorHelper.color(applet, 13F)).thenReturn(13);
        colorHelper.processImageForHue(applet, image, 60, 2, false);
        verify(applet).colorMode(PApplet.HSB, 59);
        verify(image, times(2)).loadPixels();
        verify(image).setPixel(1, 11);
        verify(image).setPixel(3, 13);
      }

      @Test public void testApplyColorFilter() {
        setRgbValuesForPixel(0, px1, 10F, 12F, 14F, 60F);
        setRgbValuesForPixel(1, px2, 20F, 22F, 24F, 70F);
        setRgbValuesForPixel(2, px3, 30F, 32F, 34F, 80F);
        setRgbValuesForPixel(3, px4, 40F, 42F, 44F, 90F);
        setRgbValuesForPixel(4, px5, 50F, 52F, 54F, 100F);

        when(pixelColorHelper.color(applet, 0F, 0F, 0F, 60F)).thenReturn(5);
        when(pixelColorHelper.color(applet, 20F, 0F, 0F, 70F)).thenReturn(15);
        when(pixelColorHelper.color(applet, 30F, 32F, 0F, 80F)).thenReturn(25);
        when(pixelColorHelper.color(applet, 40F, 42F, 44F, 90F)).thenReturn(35);
        when(pixelColorHelper.color(applet, 50F, 52F, 54F, 100F)).thenReturn(45);

        colorHelper.applyColorFilter(applet, image, 15, 25, 35, 100);
        verify(applet).colorMode(PApplet.RGB, 100);
        verify(image).loadPixels();

        verify(image).setPixel(0, 5);
        verify(image).setPixel(1, 15);
        verify(image).setPixel(2, 25);
        verify(image).setPixel(3, 35);
        verify(image).setPixel(4, 45);
      }
    }

Notice that:

- We use the MockitoJUnitRunner.
- We mock PApplet, IFAImage (created expressly for this purpose), and PixelColorHelper.
- Test methods are annotated with @Test. If you want to ignore a test (e.g., whilst debugging) you can add the annotation @Ignore.
- In setUp(), we create the pixel array and have the mock image always return it.
- Helper methods make it easier to set expectations for recurring tasks (e.g., set*ForPixel()).
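To see why testHsbColorFromImage expects (30, 7, 12), it can help to run the same histogram arithmetic on the five mocked pixels without any Processing or Mockito involved. This is only a sketch: DominantHueSketch and its parallel-array signature are made up for illustration, and it mirrors the bucketing in getDominantHue rather than replacing it.

```java
class DominantHueSketch {

    // Returns {hue, mean saturation, mean brightness} for the most common
    // rounded hue. The inputs are parallel arrays with one entry per pixel.
    // Assumes at least one pixel and that every rounded hue is < hueRange.
    static float[] dominantHue(float[] hues, float[] sats, float[] brights,
                               int hueRange) {
        int[] counts = new int[hueRange];
        float[] satSums = new float[hueRange];
        float[] brightSums = new float[hueRange];

        // Bucket every pixel by its rounded hue.
        for (int i = 0; i < hues.length; i++) {
            int bucket = Math.round(hues[i]);
            counts[bucket]++;
            satSums[bucket] += sats[i];
            brightSums[bucket] += brights[i];
        }

        // Pick the fullest bucket (the first one wins on ties).
        int best = 0;
        for (int h = 1; h < counts.length; h++) {
            if (counts[h] > counts[best]) {
                best = h;
            }
        }

        // Average saturation and brightness over that bucket only.
        return new float[] {
            best, satSums[best] / counts[best], brightSums[best] / counts[best]
        };
    }

    public static void main(String[] args) {
        // The five mocked pixels from setUp(): hue, saturation, brightness.
        float[] hues = {30f, 20f, 30f, 50f, 30f};
        float[] sats = {5f, 6f, 7f, 8f, 9f};
        float[] brights = {10f, 11f, 12f, 13f, 14f};

        float[] result = dominantHue(hues, sats, brights, 100);
        // Hue 30 appears three times; (5+7+9)/3 = 7 and (10+12+14)/3 = 12.
        System.out.println(result[0] + " " + result[1] + " " + result[2]);
        // prints "30.0 7.0 12.0"
    }
}
```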

Image State and Associated Tests

ImageState holds the current "state" of the image: the image itself, and the settings and filters that will be applied. We'll omit the full implementation of ImageState here, but we'll show how it can be tested. You can visit the source repository for this project to see the full details.

    package com.catehuston.imagefilter.model;

    import processing.core.PApplet;
    import com.catehuston.imagefilter.color.ColorHelper;

    public class ImageState {

      enum ColorMode {
        COLOR_FILTER,
        SHOW_DOMINANT_HUE,
        HIDE_DOMINANT_HUE
      }

      private final ColorHelper colorHelper;
      private IFAImage image;
      private String filepath;

      public static final int INITIAL_HUE_TOLERANCE = 5;

      ColorMode colorModeState = ColorMode.COLOR_FILTER;
      int blueFilter = 0;
      int greenFilter = 0;
      int hueTolerance = 0;
      int redFilter = 0;

      public ImageState(ColorHelper colorHelper) {
        this.colorHelper = colorHelper;
        image = new IFAImage();
        hueTolerance = INITIAL_HUE_TOLERANCE;
      }

      /* ... getters & setters */

      public void updateImage(PApplet applet, int hueRange, int rgbColorRange,
          int imageMax) { ... }

      public void processKeyPress(char key, int inc, int rgbColorRange,
          int hueIncrement, int hueRange) { ... }

      public void setUpImage(PApplet applet, int imageMax) { ... }

      public void resetImage(PApplet applet, int imageMax) { ... }

      // For testing purposes only.
      protected void set(IFAImage image, ColorMode colorModeState,
          int redFilter, int greenFilter, int blueFilter, int hueTolerance) { ... }
    }

Here we can test that the appropriate actions happen for the given state, and that fields are incremented and decremented appropriately.

    package com.catehuston.imagefilter.model;

    /* ... Imports omitted ... */

    @RunWith(MockitoJUnitRunner.class)
    public class ImageStateTest {

      @Mock PApplet applet;
      @Mock ColorHelper colorHelper;
      @Mock IFAImage image;

      private ImageState imageState;

      @Before public void setUp() throws Exception {
        imageState = new ImageState(colorHelper);
      }

      private void assertState(ColorMode colorMode, int redFilter,
          int greenFilter, int blueFilter, int hueTolerance) {
        assertEquals(colorMode, imageState.getColorMode());
        assertEquals(redFilter, imageState.redFilter());
        assertEquals(greenFilter, imageState.greenFilter());
        assertEquals(blueFilter, imageState.blueFilter());
        assertEquals(hueTolerance, imageState.hueTolerance());
      }

      @Test public void testUpdateImageDominantHueHidden() {
        imageState.setFilepath("filepath");
        imageState.set(image, ColorMode.HIDE_DOMINANT_HUE, 5, 10, 15, 10);

        imageState.updateImage(applet, 100, 100, 500);

        verify(image).update(applet, "filepath");
        verify(colorHelper).processImageForHue(applet, image, 100, 10, false);
        verify(colorHelper).applyColorFilter(applet, image, 5, 10, 15, 100);
        verify(image).updatePixels();
      }

      @Test public void testUpdateDominantHueShowing() {
        imageState.setFilepath("filepath");
        imageState.set(image, ColorMode.SHOW_DOMINANT_HUE, 5, 10, 15, 10);

        imageState.updateImage(applet, 100, 100, 500);

        verify(image).update(applet, "filepath");
        verify(colorHelper).processImageForHue(applet, image, 100, 10, true);
        verify(colorHelper).applyColorFilter(applet, image, 5, 10, 15, 100);
        verify(image).updatePixels();
      }

      @Test public void testUpdateRGBOnly() {
        imageState.setFilepath("filepath");
        imageState.set(image, ColorMode.COLOR_FILTER, 5, 10, 15, 10);

        imageState.updateImage(applet, 100, 100, 500);

        verify(image).update(applet, "filepath");
        verify(colorHelper, never()).processImageForHue(any(PApplet.class),
            any(IFAImage.class), anyInt(), anyInt(), anyBoolean());
        verify(colorHelper).applyColorFilter(applet, image, 5, 10, 15, 100);
        verify(image).updatePixels();
      }

      @Test public void testKeyPress() {
        imageState.processKeyPress('r', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 5, 0, 0, 5);

        imageState.processKeyPress('e', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);

        imageState.processKeyPress('g', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 5, 0, 5);

        imageState.processKeyPress('f', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);

        imageState.processKeyPress('b', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 5, 5);

        imageState.processKeyPress('v', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);

        imageState.processKeyPress('h', 5, 100, 2, 200);
        assertState(ColorMode.HIDE_DOMINANT_HUE, 0, 0, 0, 5);

        imageState.processKeyPress('i', 5, 100, 2, 200);
        assertState(ColorMode.HIDE_DOMINANT_HUE, 0, 0, 0, 7);

        imageState.processKeyPress('u', 5, 100, 2, 200);
        assertState(ColorMode.HIDE_DOMINANT_HUE, 0, 0, 0, 5);

        imageState.processKeyPress('h', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);

        imageState.processKeyPress('s', 5, 100, 2, 200);
        assertState(ColorMode.SHOW_DOMINANT_HUE, 0, 0, 0, 5);

        imageState.processKeyPress('s', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);

        // Random key should do nothing.
        imageState.processKeyPress('z', 5, 100, 2, 200);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);
      }

      @Test public void testSave() {
        imageState.set(image, ColorMode.SHOW_DOMINANT_HUE, 5, 10, 15, 10);
        imageState.setFilepath("filepath");
        imageState.processKeyPress('w', 5, 100, 2, 200);

        verify(image).save("filepath-new.png");
      }

      @Test public void testSetupImageLandscape() {
        imageState.set(image, ColorMode.SHOW_DOMINANT_HUE, 5, 10, 15, 10);
        when(image.getWidth()).thenReturn(20);
        when(image.getHeight()).thenReturn(8);
        imageState.setUpImage(applet, 10);
        verify(image).update(applet, null);
        verify(image).resize(10, 4);
      }

      @Test public void testSetupImagePortrait() {
        imageState.set(image, ColorMode.SHOW_DOMINANT_HUE, 5, 10, 15, 10);
        when(image.getWidth()).thenReturn(8);
        when(image.getHeight()).thenReturn(20);
        imageState.setUpImage(applet, 10);
        verify(image).update(applet, null);
        verify(image).resize(4, 10);
      }

      @Test public void testResetImage() {
        imageState.set(image, ColorMode.SHOW_DOMINANT_HUE, 5, 10, 15, 10);
        imageState.resetImage(applet, 10);
        assertState(ColorMode.COLOR_FILTER, 0, 0, 0, 5);
      }
    }

Notice that:

- We exposed a protected initialization method, set(), for testing; it helps us quickly get the system under test into a specific state.
- We mock PApplet, ColorHelper, and IFAImage (created expressly for this purpose).
- This time we use a helper (assertState()) to simplify asserting the state of the image.
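The two setUpImage tests pin down the resizing behavior even though the implementation is omitted here: a 20x8 image with a maximum of 10 becomes 10x4, and an 8x20 image becomes 4x10. A minimal sketch of scaling logic that satisfies both cases might look like the following. This is a guess consistent with the tests, not the actual ImageState code; FitToBoxSketch, fitToBox, and the choice of rounding are all assumptions.

```java
class FitToBoxSketch {

    // Scales (width, height) so that the longer side equals imageMax while
    // keeping the aspect ratio. Returns {newWidth, newHeight}.
    // Hypothetical helper; rounding to the nearest pixel is an assumption.
    static int[] fitToBox(int width, int height, int imageMax) {
        if (width >= height) {
            // Landscape (or square): pin the width, derive the height.
            int newHeight = Math.round((float) height * imageMax / width);
            return new int[] { imageMax, newHeight };
        }
        // Portrait: pin the height, derive the width.
        int newWidth = Math.round((float) width * imageMax / height);
        return new int[] { newWidth, imageMax };
    }

    public static void main(String[] args) {
        int[] landscape = fitToBox(20, 8, 10);
        int[] portrait = fitToBox(8, 20, 10);
        System.out.println(landscape[0] + "x" + landscape[1]); // 10x4
        System.out.println(portrait[0] + "x" + portrait[1]);   // 4x10
    }
}
```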

Measuring test coverage

I use EclEmma to measure test coverage within Eclipse. Overall for the app we have 81% test coverage, with none of ImageFilterApp covered, 94.8% for ImageState, and 100% for ColorHelper.

ImageFilterApp

This is where everything is tied together, but we want as little as possible here. The App is hard to unit test (much of it is layout), but because we've pushed so much of the app's functionality into our own tested classes, we're able to assure ourselves that the important parts are working as intended.

We set the size of the app, and do the layout. (These things are verified by running the app and making sure it looks okay. No matter how good the test coverage, this step should not be skipped!)

    package com.catehuston.imagefilter.app;

    import java.io.File;

    import processing.core.PApplet;

    import com.catehuston.imagefilter.color.ColorHelper;
    import com.catehuston.imagefilter.color.PixelColorHelper;
    import com.catehuston.imagefilter.model.ImageState;

    @SuppressWarnings("serial")
    public class ImageFilterApp extends PApplet {

      static final String INSTRUCTIONS = "...";

      static final int FILTER_HEIGHT = 2;
      static final int FILTER_INCREMENT = 5;
      static final int HUE_INCREMENT = 2;
      static final int HUE_RANGE = 100;
      static final int IMAGE_MAX = 640;
      static final int RGB_COLOR_RANGE = 100;
      static final int SIDE_BAR_PADDING = 10;
      static final int SIDE_BAR_WIDTH = RGB_COLOR_RANGE + 2 * SIDE_BAR_PADDING + 50;

      private ImageState imageState;

      boolean redrawImage = true;

      @Override
      public void setup() {
        noLoop();
        imageState = new ImageState(new ColorHelper(new PixelColorHelper()));

        // Set up the view.
        size(IMAGE_MAX + SIDE_BAR_WIDTH, IMAGE_MAX);
        background(0);

        chooseFile();
      }

      @Override
      public void draw() {
        // Draw image.
        if (imageState.image().image() != null && redrawImage) {
          background(0);
          drawImage();
        }

        colorMode(RGB, RGB_COLOR_RANGE);
        fill(0);
        rect(IMAGE_MAX, 0, SIDE_BAR_WIDTH, IMAGE_MAX);
        stroke(RGB_COLOR_RANGE);
        line(IMAGE_MAX, 0, IMAGE_MAX, IMAGE_MAX);

        // Draw red line.
        int x = IMAGE_MAX + SIDE_BAR_PADDING;
        int y = 2 * SIDE_BAR_PADDING;
        stroke(RGB_COLOR_RANGE, 0, 0);
        line(x, y, x + RGB_COLOR_RANGE, y);
        line(x + imageState.redFilter(), y - FILTER_HEIGHT,
            x + imageState.redFilter(), y + FILTER_HEIGHT);

        // Draw green line.
        y += 2 * SIDE_BAR_PADDING;
        stroke(0, RGB_COLOR_RANGE, 0);
        line(x, y, x + RGB_COLOR_RANGE, y);
        line(x + imageState.greenFilter(), y - FILTER_HEIGHT,
            x + imageState.greenFilter(), y + FILTER_HEIGHT);

        // Draw blue line.
        y += 2 * SIDE_BAR_PADDING;
        stroke(0, 0, RGB_COLOR_RANGE);
        line(x, y, x + RGB_COLOR_RANGE, y);
        line(x + imageState.blueFilter(), y - FILTER_HEIGHT,
            x + imageState.blueFilter(), y + FILTER_HEIGHT);

        // Draw white line.
        y += 2 * SIDE_BAR_PADDING;
        stroke(HUE_RANGE);
        line(x, y, x + 100, y);
        line(x + imageState.hueTolerance(), y - FILTER_HEIGHT,
            x + imageState.hueTolerance(), y + FILTER_HEIGHT);

        y += 4 * SIDE_BAR_PADDING;
        fill(RGB_COLOR_RANGE);
        text(INSTRUCTIONS, x, y);
        updatePixels();
      }

      // Callback for selectInput(), has to be public to be found.
      public void fileSelected(File file) {
        if (file == null) {
          println("User hit cancel.");
        } else {
          imageState.setFilepath(file.getAbsolutePath());
          imageState.setUpImage(this, IMAGE_MAX);
          redrawImage = true;
          redraw();
        }
      }

      private void drawImage() {
        imageMode(CENTER);
        imageState.updateImage(this, HUE_RANGE, RGB_COLOR_RANGE, IMAGE_MAX);
        image(imageState.image().image(), IMAGE_MAX/2, IMAGE_MAX/2,
            imageState.image().getWidth(), imageState.image().getHeight());
        redrawImage = false;
      }

      @Override
      public void keyPressed() {
        switch(key) {
        case 'c':
          chooseFile();
          break;
        case 'p':
          redrawImage = true;
          break;
        case ' ':
          imageState.resetImage(this, IMAGE_MAX);
          redrawImage = true;
          break;
        }
        imageState.processKeyPress(key, FILTER_INCREMENT, RGB_COLOR_RANGE,
            HUE_INCREMENT, HUE_RANGE);
        redraw();
      }

      private void chooseFile() {
        // Choose the file.
        selectInput("Select a file to process:", "fileSelected");
      }
    }

Notice that:

- Our implementation extends PApplet.
- Most work is done in ImageState.
- fileSelected() is the callback for selectInput().
- static final constants are defined up at the top.
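The comment on fileSelected() says it "has to be public to be found", which suggests the callback is looked up by name at runtime. The sketch below shows how a name-based lookup with Java reflection behaves, and why a private method would be missed. It is an illustration of the general technique only: that Processing resolves the callback this way is an assumption here, and CallbackByNameSketch, Sketch, and invokeCallback are hypothetical names.

```java
import java.io.File;
import java.lang.reflect.Method;

class CallbackByNameSketch {

    // Hypothetical stand-in for a sketch class with a named callback.
    public static class Sketch {
        public File chosen;

        public void fileSelected(File file) {
            chosen = file;
        }

        @SuppressWarnings("unused")
        private void hiddenCallback(File file) {
            chosen = file;
        }
    }

    // Looks up a public method by name and invokes it. Returns false when no
    // public method with that name and a single File parameter exists.
    static boolean invokeCallback(Object target, String methodName, File file) {
        try {
            // getMethod() only sees public methods, so private ones are skipped.
            Method method = target.getClass().getMethod(methodName, File.class);
            method.invoke(target, file);
            return true;
        } catch (NoSuchMethodException e) {
            return false;
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Sketch sketch = new Sketch();
        File file = new File("photo.png");
        System.out.println(invokeCallback(sketch, "fileSelected", file));   // true
        System.out.println(invokeCallback(sketch, "hiddenCallback", file)); // false
    }
}
```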

The Value of Prototyping

In real world programming, we spend a lot of time on productionisation work. Making things look just so. Maintaining 99.9% uptime. We spend more time on corner cases than refining algorithms.

These constraints and requirements are important for our users. However, there's also space for freeing ourselves from them to play and explore.

Eventually, I decided to port this to a native mobile app. Processing has an Android library, but as many mobile developers do, I opted to go iOS first. I had years of iOS experience, although I'd done little with CoreGraphics, but I don't think that even if I had had this idea initially, I would have been able to build it straight away on iOS. The platform forced me to operate in the RGB color space, and made it hard to extract the pixels from the image (hello, C). Memory use and waiting time were major risks.

There were exhilarating moments, when it worked for the first time. When it first ran on my device... without crashing. When I optimized memory usage by 66% and cut seconds off the runtime. And there were large periods of time locked away in a dark room, cursing intermittently.

Because I had my prototype, I could explain to my business partner and our designer what I was thinking and what the app would do. It meant I deeply understood how it would work, and it was just a question of making it work nicely on this other platform. I knew what I was aiming for, so at the end of a long day shut away fighting with it and feeling like I had little to show for it I kept going… and hit an exhilarating moment and milestone the following morning.

So, how do you find the dominant color in an image? There's an app for that: Show & Hide.


Source: https://www.aosabook.org/en/500L/making-your-own-image-filters.html

Posted by: bookerestinabot1938.blogspot.com
